On this article, we’ll speak about classical computation: the form of computation usually present in an undergraduate Pc Science course on Algorithms and Knowledge Constructions [1]. Suppose shortest path-finding, sorting, intelligent methods to interrupt issues down into easier issues, unimaginable methods to organise knowledge for environment friendly retrieval and updates. In fact, given The Gradient’s give attention to Synthetic Intelligence, we is not going to cease there; we may even examine learn how to seize such computation with deep neural networks.

Why seize classical computation?

Perhaps it’s value beginning by clarifying the place my vested curiosity in classical computation comes from. Aggressive programming—the artwork of fixing issues by quickly writing packages that have to terminate in a given period of time, and inside sure reminiscence constraints—was a extremely standard exercise in my secondary faculty. For me, it was actually the gateway into Pc Science, and I belief the story is analogous for a lot of machine studying practitioners and researchers immediately. I’ve been in a position to win a number of medals at worldwide programming competitions, such because the Northwestern Europe Regionals of the ACM-ICPC, the top-tier Pc Science competitors for college college students. Hopefully, my successes in aggressive programming additionally give me some credentials to write down about this subject.

Whereas this could clarify why I care about classical computation, why ought to all of us care? To reach at this reply, allow us to ponder a few of the key properties that classical algorithms have:

- They’re provably appropriate, and we are able to usually have robust ensures in regards to the sources (time or reminiscence) required for the computation to terminate.

- They provide robust generalisation: whereas algorithms are sometimes devised by observing a number of small-scale instance inputs, as soon as applied, they are going to work with out fault on inputs which might be considerably bigger, or distributionally completely different than such examples.

- By design, they’re interpretable and compositional: their (pseudo)code illustration makes it a lot simpler to motive about what the computation is definitely doing, and one can simply recompose varied computations collectively via subroutines to realize completely different capabilities.

all of those properties taken collectively, they appear to be precisely the problems that plague fashionable deep neural networks essentially the most: you’ll be able to hardly ever assure their accuracy, they usually collapse on out-of-distribution inputs, and they’re very infamous as black containers, with compounding errors that may hinder compositionality.

Nevertheless, it’s precisely these expertise which might be vital for making AI instructive and helpful to people! For instance, to have an AI system that reliably and instructively teaches an idea to a human, the standard of its output mustn’t depend upon minor particulars of the enter, and it ought to be capable to generalise that idea to novel conditions. Arguably, these expertise are additionally a lacking key step on the street to constructing generally-intelligent brokers. Subsequently, if we’re in a position to make any strides in the direction of capturing traits of classical computation in deep neural networks, that is prone to be a really fruitful pursuit.

First impressions: Algorithmic alignment

My journey into the sector began with an fascinating, however seemingly inconsequential query:

Can neural networks study to execute classical algorithms?

This may be seen as a great way to benchmark to what extent are sure neural networks able to behaving algorithmically; arguably, if a system can produce the outputs of a sure computation, it has “captured” it. Additional, studying to execute gives a uniquely well-built setting for evaluating machine studying fashions:

- An infinite knowledge supply—as we are able to generate arbitrary quantities of inputs;

- They require complicated knowledge manipulation—making it a difficult activity for deep studying;

- They’ve a clearly specified goal perform—simplifying interpretability analyses.

Once we began learning this space in 2019, we actually didn’t suppose extra of it than a really neat benchmark—however it was actually turning into a really vigorous space. Concurrently with our efforts, a staff from MIT tried tackling a extra bold, however strongly associated drawback:

What makes a neural community higher (or worse) at becoming sure (algorithmic) duties?

The landmark paper, “What Can Neural Networks Cause About?” [2] established a mathematical basis for why an structure is likely to be higher for a activity (when it comes to pattern complexity: the variety of coaching examples wanted to scale back validation loss under epsilon). The authors’ principal theorem states that higher algorithmic alignment results in higher generalisation. Rigorously defining algorithmic alignment is out of scope of this textual content, however it may be very intuitively visualised:

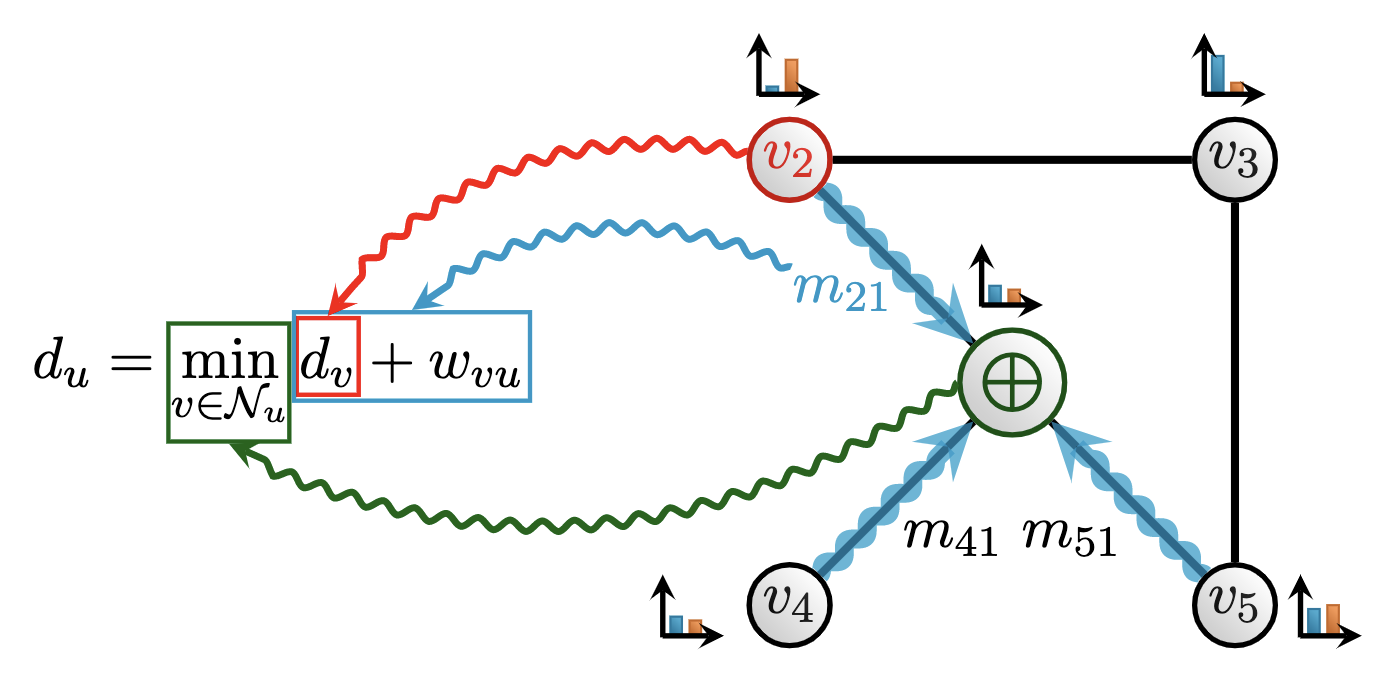

Right here, we are able to see a visible decomposition of how a graph neural community (GNN) [3] aligns with the classical Bellman-Ford [4] algorithm for shortest path-finding. Particularly, Bellman-Ford maintains its present estimate of how far-off every node is from the supply node: a distance variable (du) for every node u in a graph. At each step, for each neighbour v of node u, an replace to du is proposed: a mixture of (optimally) reaching v, after which traversing the sting connecting v and u (du + wvu). The space variable is then up to date because the optimum out of all of the proposals. Operations of a graph neural community can naturally decompose to comply with the info movement of Bellman-Ford:

- The space variables correspond to the node options maintained by the GNN;

- Including the sting distance to a distance variable corresponds to computing the GNN’s message perform;

- Selecting the optimum neighbour primarily based on this measure corresponds to the GNN’s permutation-invariant aggregation perform, ⨁.

Typically, it may be confirmed that, the extra carefully we are able to decompose our neural community to comply with the algorithm’s construction, the extra beneficial pattern complexity we are able to count on when studying to execute such algorithms. Bellman-Ford is a typical occasion of a dynamic programming (DP) algorithm [5], a general-purpose problem-solving technique that breaks the issue down into subproblems, after which recombines their options to search out the ultimate resolution.

The MIT staff made the vital statement that GNNs seem to algorithmically align with DP, and since DP can itself be used to precise many helpful types of classical computation, GNNs needs to be a really potent general-purpose mannequin for studying to execute. This was validated by a number of fastidiously constructed DP execution benchmarks, the place relational fashions like GNNs clearly outperformed architectures with weaker inductive biases. GNNs have been a long-standing curiosity of mine [6], so the time was proper to launch our personal contribution to the world:

Neural Execution of Graph Algorithms

On this paper [7], concurrently launched with Xu et al. [2], we carried out an intensive empirical evaluation of studying to execute with GNNs. We discovered that whereas algorithmic alignment is certainly a robust instrument for mannequin class choice, it sadly doesn’t permit us to be reckless. Particularly, we can not simply apply any expressive GNN to an algorithmic execution activity and count on nice outcomes, particularly out-of-distribution—which we beforehand recognized as a key setting by which “true” reasoning techniques ought to carry out effectively. Very like different neural networks, GNNs can very simply overfit to the traits of the distribution of coaching inputs, studying “intelligent hacks” and sidestepping the precise process that they’re making an attempt to execute.

We therefore determine three key observations on cautious inductive biases to make use of, to enhance the algorithmic alignment to sure path-finding issues even additional and permit for generalising to 5x bigger inputs at check time:

- Most conventional deep studying setups contain a stack of layers with unshared parameters. This essentially limits the quantity of computation the neural community can carry out: if, at check time, an enter a lot bigger than those within the coaching knowledge arrives, it might be anticipated that extra computational steps are wanted—but, the unshared GNN has no approach to assist that. To handle this, we undertake the encode-process-decode paradigm [8]: a single shared processor GNN is iterated for a lot of steps, and this variety of steps may be variable at each coaching and inference time. Such an structure additionally permits a neat approach to algorithmically align to iterative computation, as most algorithms contain repeatedly making use of a sure computation till convergence.

- Since most path-finding algorithms (together with Bellman-Ford) require “native” optimisation (i.e. selecting precisely one optimum neighbour at each step), we opted to make use of max aggregation to mix the messages despatched in GNNs. Whereas this may occasionally appear to be a really intuitive concept, it went strongly towards the folklore of the instances, as max-GNNs had been recognized to be theoretically inferior to sum-GNNs at distinguishing non-isomorphic graphs [9]. (We now have strong theoretical proof [10] for why it is a good concept.)

- Lastly, whereas most deep studying setups solely require producing an output given an enter, we discovered that this misses out on a wealth of the way to instruct the mannequin to align to the algorithm. For instance, there are various fascinating invariants that algorithms have that may be explicitly taught to a GNN. Within the case of Bellman-Ford, after ok iterations are executed, we needs to be anticipated to have the ability to get better all shortest paths which might be not more than ok hops away from the supply node. Accordingly, we use this perception to supply step-wise supervision to the GNN at each step. This concept seems to be gaining traction in Massive Language Mannequin design in current months [11, 12].

All three of the above modifications make for stronger algorithmically-aligned GNNs.

Enjoying the alignment recreation

It have to be confused that the intuitive concept of algorithmic alignment—taking inspiration from Pc Science ideas in structure design—is under no circumstances novel! The text-book instance of this are the neural Turing machine (NTM) and differentiable neural pc (DNC) [13, 14]. These works are decisively influential; in truth, in its try to make random-access reminiscence suitable with gradient-based optimisation, the NTM gave us one of many earliest types of content-based consideration, three years earlier than Transformers [15]!

Nevertheless, regardless of their affect, these architectures are these days just about by no means utilized in apply. There are a lot of attainable causes for this, however for my part it’s as a result of their design was too brittle: attempting to introduce many differentiable parts into the identical structure without delay, and not using a clear steering on how to compose them, or a approach to simply debug them as soon as they failed to point out helpful sign on a brand new activity. Our line of labor nonetheless needs to construct one thing like an NTM—however make it extra efficiently deployed, through the use of algorithmic alignment to extra fastidiously prototype every constructing block in isolation, and have a extra granular view of which blocks profit execution of which goal algorithms.

Our method of “enjoying the algorithmic alignment recreation” seems to have yielded a profitable line of specialized (G)NNs, and we now have worthy ‘fine-grained’ options for studying to execute linearithmic sequence algorithms [16], iterative algorithms [17], pointer-based knowledge buildings [18], in addition to persistent auxiliary reminiscence [19]. Finally, these insights additionally carried over to extra fine-grained concept as effectively. In gentle of our NEGA paper [7], the inventors of algorithmic alignment refined their concept into what’s now referred to as linear algorithmic alignment [10], offering justification for, amongst different issues, our use of the max aggregation. Newer insights present that understanding algorithmic alignment might require causal reasoning [20,21], correctly formalising it might require class concept [22], and correctly describing it might require analysing asynchronous computation [23]. Algorithmic alignment is subsequently turning into a really thrilling space for mathematical approaches to deep studying lately.

Why not simply run the goal algorithm?…and rebuttals

Whereas it seems numerous helpful progress has been made in the direction of addressing our preliminary “toy query”, the thought of studying to execute will not be one which simply passes peer evaluate. My private favorite reviewer remark I obtained—quoted in full—was as follows: “This paper will definitely speed up analysis in constructing algorithmic fashions, and there’s actually numerous researchers that will make benefit of it, I’m simply unsure that this analysis ought to even exist”.

That is clearly not the nicest factor to obtain as the primary creator of a paper. However nonetheless, let’s attempt to put my ego apart, and see what may be taken away from such evaluations. There’s a clear sense by which such evaluations are elevating a wholly legitimate level: tautologically, the goal algorithm will execute itself higher (or equally good) than any GNN we’d ever study over it. Clearly, if we would like wide-spread recognition of those concepts, we have to present how studying to execute may be usefully utilized past the context of “pure” execution.

Our exploration led us to many attainable concepts, and within the the rest of this text, I’ll present three concepts that noticed essentially the most influence.

First, algorithmically aligned fashions can speed up science. And if you would like clear proof of this, look no additional than the quilt of Nature [24]. In our work, we practice (G)NNs to suit a mathematical dataset of curiosity to a mathematician, after which use easy gradient saliency strategies to sign to the mathematician which elements of the inputs to focus their consideration at. Whereas such indicators are sometimes remarkably noisy, they do permit a mathematician to review solely essentially the most salient 20-30 nodes in a graph that will in any other case have lots of or 1000’s of nodes, making sample discovery a lot simpler. The found patterns can then kind the premise of novel theorems, and/or be used to derive conjectures.

With this straightforward method, we had been in a position to drive unbiased contributions to 2 extremely disparate areas of math: knot concept [25] and illustration concept [26], each subsequently printed of their areas’ respective top-tier journals, therefore incomes us the Nature accolade. However, whereas our method is straightforward in precept, a query arose particularly within the illustration concept department: which (G)NN to make use of? Customary expressive GNNs didn’t yield clearly interpretable outcomes.

That is the place algorithmic alignment helped us: Geordie Williamson, our illustration concept collaborator, supplied an algorithm that will compute the outputs we care about, if we had entry to privileged info. We achieved our greatest outcomes with a GNN mannequin that was explicitly aligning its parts to this goal algorithm.

Extra typically: on this case, a goal algorithm existed, however executing it was inapplicable (resulting from privileged inputs). Algorithmic alignment allowed us to embed “priors” from it anyway.

Second, algorithmically aligned fashions are quick heuristics. In current work with pc networking and machine studying collaborators from ETH Zürich, we studied the applicability of neural algorithmic reasoning in pc networking [27]. Particularly, we sought to expedite the difficult activity of configuration synthesis: primarily based on a given specification of constraints a pc community ought to fulfill, produce a corresponding community configuration (a graph specifying the routers in a community and their connections). This configuration should fulfill all the specs, as soon as a community protocol has been executed over it.

Producing these configurations is thought to be a really difficult NP-hard drawback—in apply, it’s normally solved with sluggish SMT solvers, which may usually require doubly-exponential complexity. As an alternative, we select to make use of concepts from algorithmic reasoning to generate configurations by inverting the protocol (which may be seen as a graph algorithm). Particularly, we generate many random community configurations, execute the protocol over them, and acquire all true details in regards to the ensuing community to extract corresponding specs. This provides us all we have to generate a graph-based dataset from specs to configurations, and match an algorithmically-aligned GNN to this dataset.

Naturally, by advantage of simply requiring a ahead cross of a machine studying mannequin, this method is considerably sooner than SMT solvers at inference time: for sure configurations, now we have noticed over 490x speedup over the prior state-of-the-art. In fact, there is no such thing as a free lunch: the worth we pay for this speedup are occasional inaccuracies within the produced configurations at test-time. That being stated, on all of the related distributions we evaluated, the common variety of constraints happy has constantly been over 90%, which makes our methodology already relevant for downstream human-in-the-loop use—and it’s prone to speed up human designers, as fairly often, the preliminary configurations are unsatisfiable, that means SMT solvers will spend numerous effort solely to say a satisfying configuration can’t be discovered. Throughout this time, a quick ahead cross of a GNN might have allowed for a lot extra fast iteration.

Extra typically: on this case, a goal algorithm is simply being approximated, however such that it gives a quick and fairly correct heuristic, enabling fast human-in-the-loop iteration.

A core drawback with making use of classical algorithms

To set us up for the third and closing concept, let me pose a motivating activity: “Discover me the optimum path from A to B”. How do you reply to this immediate?

Chances are high, particularly for those who come from a theoretical Pc Science background like me, that you’ll reply to this query in a really singular means. Particularly, you’ll subtly assume that I’m offering you with a weighted graph and asking you for the shortest path between two particular vertices on this graph. We will then diligently apply our favorite path-finding algorithm (e.g. Dijkstra’s algorithm [28]) to resolve this question. I ought to spotlight that, not less than on the time of scripting this textual content, the scenario will not be very completely different with most of immediately’s state-of-the-art AI chatbots—when prompted with the above activity, whereas they are going to usually search additional info, they are going to promptly assume that there’s an enter weighted graph supplied!

Nevertheless, there’s nothing in my query that requires both of those assumptions to be true. Firstly, the real-world is usually extremely noisy and dynamic, and barely gives such abstractified inputs. For instance, the duty would possibly concern the optimum approach to journey between two locations in a real-world transportation community, which is a difficult routing drawback that depends on processing noisy, complicated knowledge to estimate real-time visitors speeds—a lesson I’ve personally learnt, after I labored on deploying GNNs inside Google Maps [29]. Secondly, why should “optimum” equal shortest? Within the context of routing visitors, and relying on the precise contexts and objectives, “optimum” might effectively imply most cost-efficient, least polluting, and so forth. All of those variations make an easy software of Dijkstra’s algorithm troublesome, and should in apply require a mixture of a number of algorithms.

Each of those points spotlight that we regularly have to make a difficult mapping between complicated real-world knowledge and an enter that will likely be applicable for operating a goal algorithm. Traditionally, this mapping is carried out by people, both manually or through specialised heuristics. This naturally invitations the next query: Can people ever hope to have the ability to manually discover the required mapping, basically? I’d argue that, not less than because the Fifties, we’ve recognized the reply to this query to be no. Straight quoting from the paper of Harris and Ross [30], which is likely one of the first accounts of the most movement drawback (via analysing railway networks):

The analysis of each railway system and particular person monitor capacities is, to a substantial extent, an artwork. The authors know of no examined mathematical mannequin or method that features all variations and imponderables that have to be weighed. Even when the person has been carefully related to the actual territory he’s evaluating, the ultimate reply, nonetheless correct, is basically one in all judgment and expertise.

Therefore, even extremely expert people usually have to make educated guesses when attaching a single scalar “capability” worth to every railway hyperlink—and this must be executed earlier than any movement algorithm may be executed over the enter community! Moreover, as evidenced by the next assertion from the current Amazon Final Mile Routing Analysis Problem [31],

…there stays an vital hole between theoretical route planning and real-life route execution that the majority optimization-based approaches can not bridge. This hole pertains to the truth that in real-life operations, the standard of a route will not be solely outlined by its theoretical size, length, or price however by a large number of things that have an effect on the extent to which drivers can successfully, safely, and conveniently execute the deliberate route beneath real-life circumstances.

Therefore, these issues stay related even within the high-stakes, large knowledge settings of immediately. This can be a elementary divide between classical algorithms and the real-world issues they had been initially designed to unravel! Satisfying the strict preconditions for making use of an algorithm might result in drastic lack of info from complicated, naturally-occurring inputs. Or, put merely:

It doesn’t matter if the algorithm is provably appropriate, if we execute it on the unsuitable inputs!

This difficulty will get considerably tricker if the info is partially observable, there are adversarial actors within the setting, and so forth. I need to stress that this isn’t a difficulty theoretical pc scientists are inclined to concern themselves with, and possibly for good motive! Focussing on the algorithms within the “abstractified” setting is already fairly difficult, and it has yielded a few of the most stunning computational routines which have considerably remodeled our lives. That being stated, if we wish to give “superpowers” to those routines and make them relevant means past the sorts of inputs they had been initially envisioned for, we have to discover some approach to bridge this divide. Our proposal, the neural algorithmic reasoning blueprint [32], goals to bridge this divide by neuralising the goal algorithm.

Neural Algorithmic Reasoning

Since a key limitation we recognized is the necessity for guide enter characteristic engineering, a great first level of assault might be to easily substitute the characteristic engineer with a neural community encoder. In spite of everything, changing characteristic engineering is how deep studying obtained its main breakthrough [33]! The encoder would study to foretell the inputs to the algorithm from the uncooked knowledge, after which we are able to execute the algorithm over these inputs to acquire the outputs we care about.

This sort of pipeline is remarkably standard [34]; in current instances, there have been seminal outcomes permitting for backpropagating via the encoder even when the algorithm itself is non-differentiable [35]. Nevertheless, it suffers from an algorithmic bottleneck drawback: particularly, it’s totally committing itself to the algorithm’s outputs [36]. That’s, if the inputs to the algorithm are poorly predicted by the encoder, we run into the identical difficulty as earlier than—the algorithm will give an ideal reply in an incorrect setting. Because the required inputs are normally scalar in nature (e.g. a single distance per fringe of the enter graph), the encoder is usually tasked with mapping the extraordinarily wealthy construction of actual world knowledge into solely a single quantity. This notably turns into a difficulty with low-data or partially-observable eventualities.

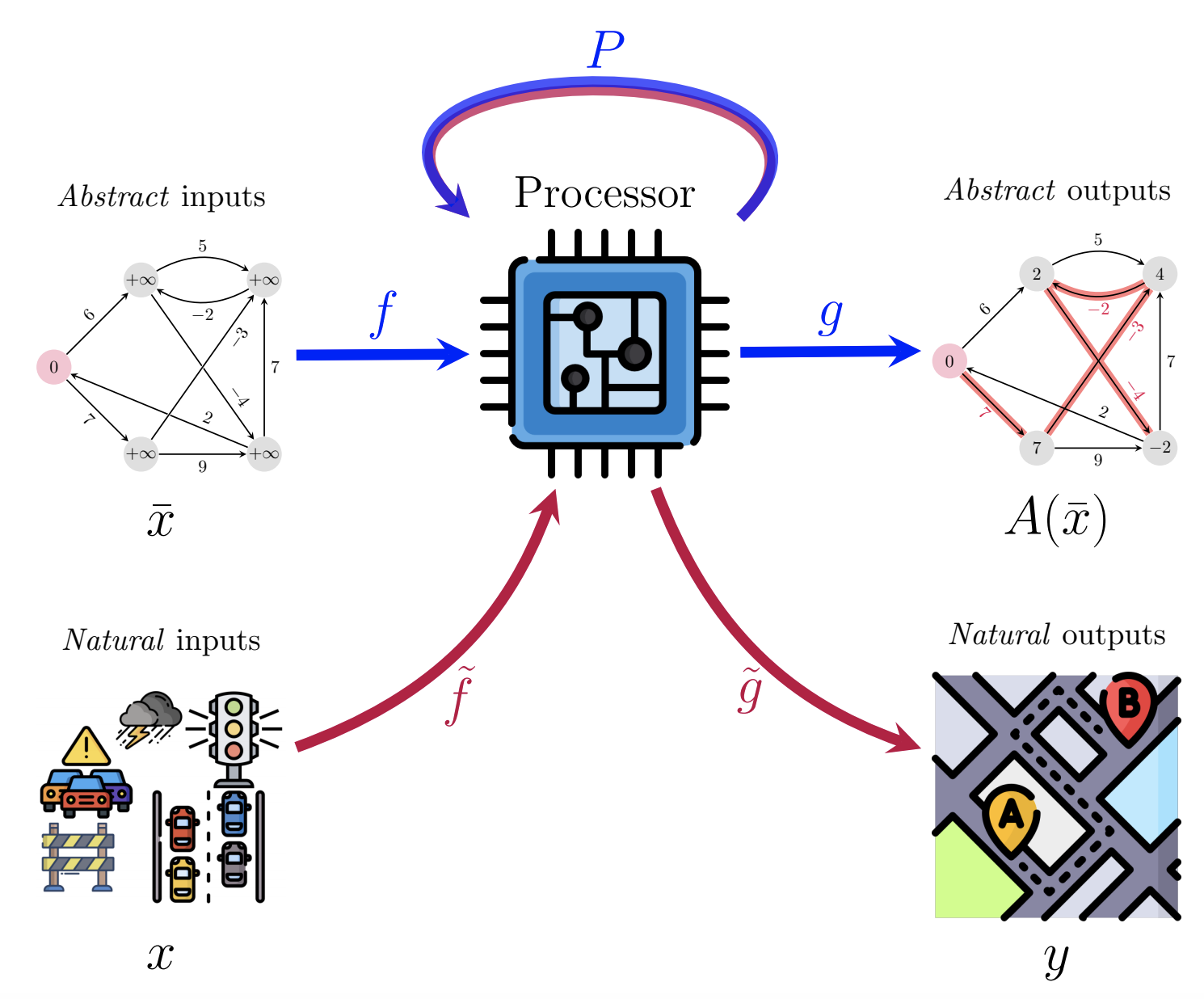

To interrupt the algorithmic bottleneck, we as an alternative decide to symbolize each the encoder and the algorithm as high-dimensional neural networks! Now, our algorithmic mannequin is a processor neural community—mapping excessive dimensional embeddings to excessive dimensional embeddings. To get better related outputs, we are able to then connect an applicable decoder community to the output embeddings of the processor. If we had been in a position to assure that this processor community “captures the computation” of the algorithm, then we’d concurrently resolve all the points beforehand recognized:

- Our pipeline could be an end-to-end differentiable neural community;

- It could even be high-dimensional all through, assuaging the algorithmic bottleneck;

- If any computation can’t be defined by the processor, we are able to add skip connections going immediately from the encoder to the decoder, to deal with such residual info.

So, all we’d like now’s to provide a neural community which captures computation! However, wait… that’s precisely what now we have been speaking about on this complete weblog publish! 🙂

We’ve got arrived on the neural algorithmic reasoning (NAR) blueprint [32]:

This determine represents a neat abstract of all the things now we have lined to this point: first, we receive an acceptable processor community, P, by algorithmic alignment to a goal algorithm, or pre-training on studying to execute the goal algorithm, or each! As soon as prepared, we embrace P into any neural pipeline we care about over uncooked (pure) knowledge. This enables us to use goal algorithms “on inputs beforehand thought-about inaccessible to them”, within the phrases of the unique NAR paper [32]. Relying on the circumstances, we might or might not want to hold P’s parameters frozen as soon as deployed, or P might even be solely nonparametric [37,38]!

Whereas initially solely a proposal, there are actually a number of profitable NAR cases which were printed at top-tier venues [36,39,40,41]. Within the most-recent such paper [41], we aimed to review learn how to classify blood vessels within the mouse mind—a really difficult graph activity spanning hundreds of thousands of nodes and edges [42]. Nevertheless, whereas it’s not trivial to immediately classify blood vessels from their options, it’s fairly protected to imagine that the principle function of blood vessels is to conduct blood movement—therefore, an algorithm for movement evaluation might be an acceptable one to deploy right here. Accordingly, we first pre-trained a NAR processor to execute the related maximum-flow and minimum-cut algorithms [43], then efficiently deployed it on the mind vessel graph, surpassing the earlier state-of-the-art GNNs. It’s worthy to notice that the mind vessel graph is 180,000x bigger than the artificial graphs we used for studying to execute, and minimal hyperparameter tuning was utilized all through! We’re assured this success is simply the primary of many.

The place can we get good processors from?

Whereas the concepts given in prior subsections hopefully present a great argument for the utility of capturing classical computation, in apply we nonetheless have to know which computation to seize! The entire NAR papers referenced above use goal algorithms extremely related to the downstream activity, and the processors are educated utilizing bespoke execution datasets constructed on prime of these algorithms. This usually requires each area experience and pc science experience, and therefore represents a transparent barrier of entry!

Since my beginnings in NAR, I’ve been strongly fascinated about lowering this barrier of entry, whereas additionally offering a set of “base processors” that needs to be helpful in all kinds of duties. This resulted in a two-year-long engineering effort, culminating by open-sourcing the CLRS Algorithmic Reasoning Benchmark (https://github.com/deepmind/clrs) [44].

The CLRS benchmark was impressed by the long-lasting Introduction to Algorithms (CLRS) textbook from Cormen, Leiserson, Rivest and Stein [1], which is likely one of the hottest undergraduate textbooks on classical algorithms and knowledge buildings, and a “bible” for aggressive programmers. Regardless of its many 1000’s of pages, it solely accommodates ~90 distinct algorithms, and these algorithms are inclined to kind the foundations behind complete careers in software program engineering! Therefore, the algorithms in CLRS can kind our desired strong set of “base processors”, and we got down to make it simple to assemble and practice NAR processors to execute the algorithms in CLRS.

In its first incarnation, we launched CLRS-30, a set of dataset and processor mills for thirty algorithms in CLRS, spanning all kinds of expertise: sorting, looking, dynamic programming, graph algorithms, string algorithms and geometric algorithms.

What makes CLRS-30 particular is the wide range of knowledge, fashions and pipelines it exposes to the person: given an applicable specification of the goal algorithm’s variables, an implementation of the goal algorithm, and a fascinating random enter sampler, CLRS will robotically produce the total execution trajectories of this algorithm’s variables in a spatio-temporal graph-structured format, related encoder/decoder/processor fashions, and loss features. For that reason, we usually discuss with CLRS as a “dataset and baseline generator” relatively than a person dataset.

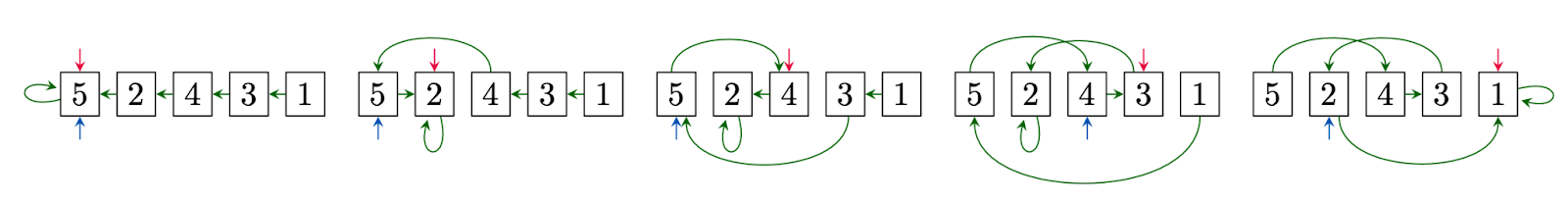

For instance, here’s a CLRS-produced trajectory for insertion sorting a listing [5, 2, 4, 3, 1]:

This trajectory totally exposes the interior state of the algorithm: how the listing’s pointers (in inexperienced) change over time, which aspect is at present being sorted (in purple), and the place it must be sorted into (in blue). By default, these can be utilized for step-wise supervision, though just lately extra fascinating methods to make use of the trajectories, similar to Trace-ReLIC [21], had been proposed.

Why cease at simply one algorithm? May we study all thirty?

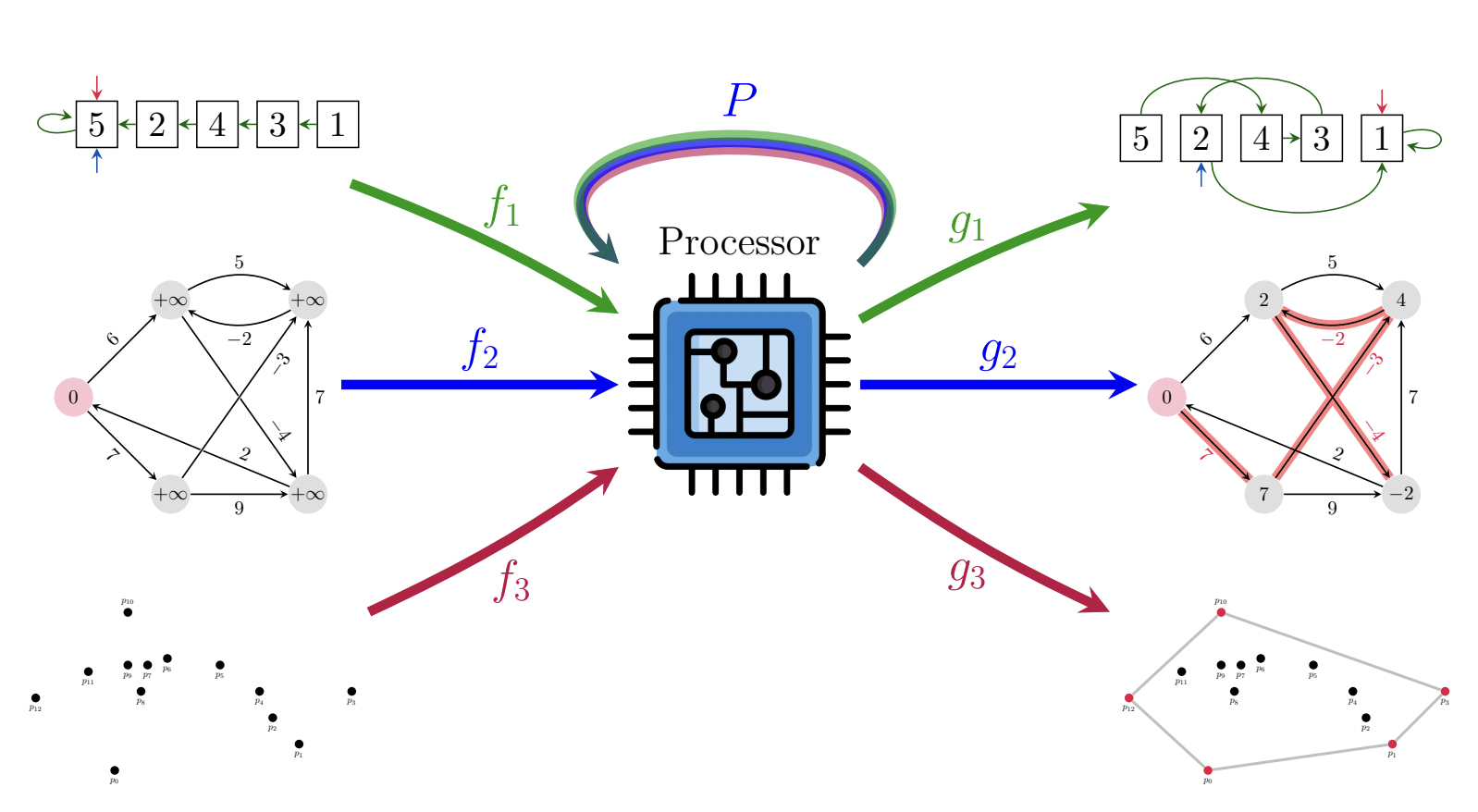

As talked about, CLRS-30 converts thirty various algorithms right into a unified graph illustration. This paves the way in which for an apparent query: might we study one processor (with a single set of weights) to execute all of them? To be clear, we envision a NAR processor like this:

That’s, a single (G)NN, able to executing sorting, path-finding, convex hull discovering, and all different CLRS algorithms. Because the enter and output dimensionalities can range wildly between the completely different algorithms, we’d nonetheless suggest utilizing separate encoders and decoders for every algorithm—mapping into and out of the processor’s latent area—nonetheless, we intentionally hold these as linear features to position nearly all of the computational effort on P.

Nevertheless, regardless of the bottom concept being easy in precept, this proves to be no trivial endeavour. Most prior makes an attempt to study a number of algorithms, similar to NEGA [7] or its successor, NE++ [45], have intentionally focussed on studying to execute extremely associated algorithms, the place the training indicators are prone to be well-correlated. Accordingly, our preliminary single-processor multi-task coaching runs on all of CLRS-30 resulted in NaNs.

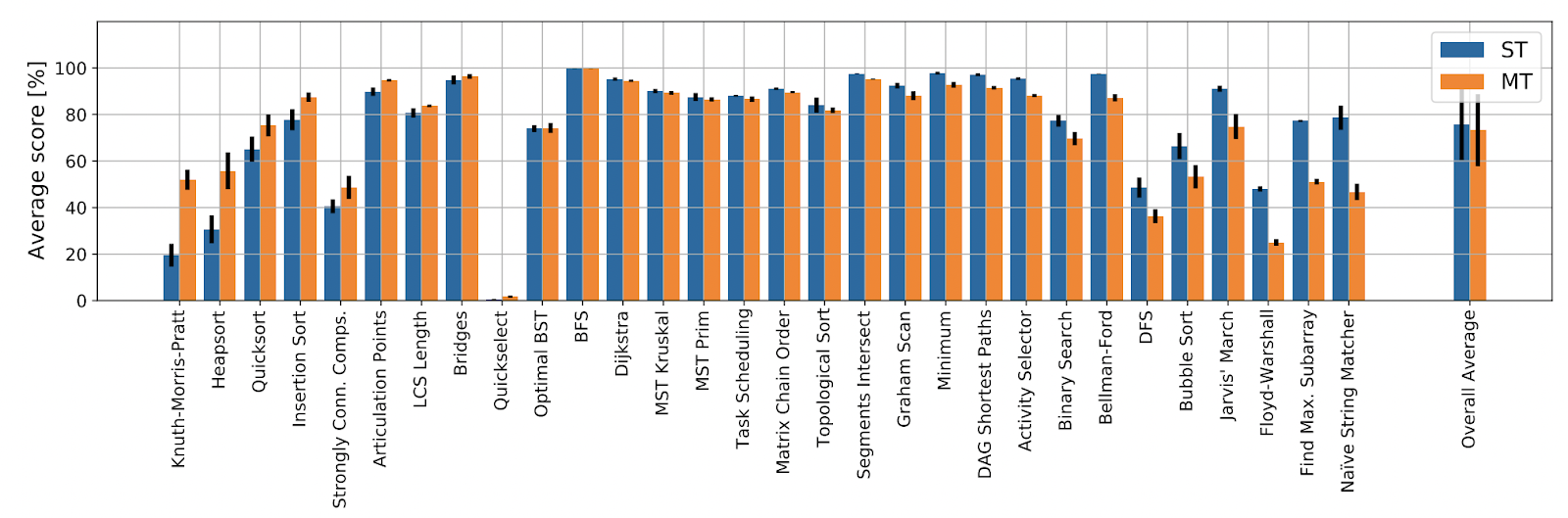

We’ve got been in a position to determine single-task instabilities as the principle offender of this: if the gradients for any particular person algorithmic activity are noisy or unstable, this interprets to unstable coaching on all thirty of them. Therefore, we launched a devoted “mini-strike” of two months to determine and repair studying stability points in single-task learners. Our ensuing mannequin, Triplet-GMPNN [46], improves absolute imply efficiency over CLRS-30 by over 20% from prior state-of-the-art, and allows profitable multi-task coaching! What’s extra, we now have a single generalist Triplet-GMPNN that, on common, matches the thirty specialist Triplet-GMPNNs, evaluated out-of-distribution:

Whereas it’s evident from this plot that we nonetheless have an extended approach to go earlier than we produce a completely “algorithmic” NAR processor library, this consequence has been seen as a big “compression” milestone, just like the Gato [47] paper. The discharge of our Triplet-GMPNN mannequin sparked extraordinarily fascinating discussions on Twitter, Reddit and HackerNews, particularly in gentle of its implications to developing generally-intelligent techniques. Typically, the progress in NAR made by varied teams over simply the previous three years has been unimaginable to watch. And we’re simply getting began: now that we are able to, in precept, construct generalist NAR processors, I’m immensely excited in regards to the potential this holds for future analysis and merchandise.

Wish to know extra?

For sure, there’s rather a lot of fabric this text doesn’t cowl—particularly, the technical and implementation particulars of most of the papers mentioned herein. If what you’ve learn right here has made you to know extra, and even play with NAR fashions your self, I like to recommend you try the LoG’22 Tutorial on NAR (https://algo-reasoning.github.io/), which I delivered alongside Andreea Deac and Andrew Dudzik. Over the course of just below three hours, we cowl all the concept wanted to grasp the foundations of growing, deploying, and deepening neural algorithmic reasoners, together with plentiful code pointers and references. And naturally, you might be greater than welcome to succeed in out immediately, ought to you’ve any follow-up questions, fascinating factors of debate, and even fascinating concepts for tasks!

References

- TH. Cormen, CE. Leiserson, RL. Rivest, and C. Stein. Introduction to Algorithms. MIT Press’22.

- Ok. Xu, J. Li, M. Zhang, SS. Du, Ok-I. Kawarabayashi, and S. Jegelka. What Can Neural Networks Cause About?. ICLR’20.

- P. Veličković. The whole lot is Linked: Graph Neural Networks. Present Opinion in Structural Biology’23.

- R. Bellman. On a Routing Drawback. Quarterly of Utilized Arithmetic’58.

- R. Bellman. Dynamic Programming. Science’66.

- P. Veličković, G. Cucurull, A. Casanova, A. Romero, P. Liò, and Y. Bengio. Graph Consideration Networks. ICLR’18.

- P. Veličković, R. Ying, M. Padovano, R. Hadsell, and C. Blundell. Neural Execution of Graph Algorithms. ICLR’20.

- JB. Hamrick, KR. Allen, V. Bapst, T. Zhu, KR. McKee, JB. Tenenbaum, and PW. Battaglia. Relational inductive biases for bodily development in people and machines. CCS’18.

- Ok. Xu*, W. Hu*, J. Leskovec, and S. Jegelka. How Highly effective are Graph Neural Networks?. ICLR’19.

- Ok. Xu, M. Zhang, J. Li, SS. Du, Ok-I. Kawarabayashi, and S. Jegelka. How Neural Networks Extrapolate: From Feedforward to Graph Neural Networks. ICLR’21.

- J. Uesato*, N. Kushman*, R. Kumar*, F. Track, N. Siegel, L. Wang, A. Creswell, G. Irving, and I. Higgins. Fixing math phrase issues with process- and outcome-based suggestions. arXiv’22.

- H. Lightman*, V. Kosaraju*, Y. Burda*, H. Edwards, B. Baker, T. Lee, J. Leike, J. Schulman, I. Sutskever, and Ok. Cobbe. Let’s Confirm Step by Step. arXiv’23.

- A. Graves, G. Wayne, and I. Danihelka. Neural Turing Machines. arXiv’14.

- A. Graves, G. Wayne, M. Reynolds, T. Harley, I. Danihelka, A. Grabska-Barwińska, S. Gómez Colmenarejo, E. Grefenstette, T. Ramalho, J. Agapiou, A. Puigdomènech Badia, KM. Hermann, Y. Zwols, G. Ostrovski, A. Cain, H. King, C. Summerfield, P. Blunsom, Ok. Kavukcuoglu, and D. Hassabis. Hybrid computing utilizing a neural community with dynamic exterior reminiscence. Nature’16.

- A. Vaswani*, N. Shazeer*, N. Parmar*, J. Uszkoreit*, L. Jones*, AN. Gomez*, Ł. Kaiser*, and I. Polosukhin*. Consideration is all you want. NeurIPS’17.

- Ok. Freivalds, E. Ozoliņš, and A. Šostaks. Neural Shuffle-Change Networks – Sequence Processing in O(n log n) Time. NeurIPS’19.

- H. Tang, Z. Huang, J. Gu, B-L. Lu, and H. Su. In direction of Scale-Invariant Graph-related Drawback Fixing by Iterative Homogeneous GNNs. NeurIPS’20.

- P. Veličković, L. Buesing, MC. Overlan, R. Pascanu, O. Vinyals, and C. Blundell. Pointer Graph Networks. NeurIPS’20.

- H. Strathmann, M. Barekatain, C. Blundell, and P. Veličković. Persistent Message Passing. ICLR’21 SimDL.

- B. Bevilacqua, Y. Zhou, and B. Ribeiro. Measurement-invariant graph representations for graph classification extrapolations. ICML’21.

- B. Bevilacqua, Ok. Nikiforou*, B. Ibarz*, I. Bica, M. Paganini, C. Blundell, J. Mitrovic, and P. Veličković. Neural Algorithmic Reasoning with Causal Regularisation. ICML’23.

- A. Dudzik*, and P. Veličković*. Graph Neural Networks are Dynamic Programmers. NeurIPS’22.

- A. Dudzik, T. von Glehn, R. Pascanu, and P. Veličković. Asynchronous Algorithmic Alignment with Cocycles. ICML’23 KLR.

- A. Davies, P. Veličković, L. Buesing, S. Blackwell, D. Zheng, N. Tomašev, R. Tanburn, P. Battaglia, C. Blundell, A. Juhász, M. Lackenby, G. Williamson, D. Hassabis, and P. Kohli. Advancing arithmetic by guiding human instinct with AI. Nature’21.

- A. Davies, A. Juhász, M. Lackenby, and N. Tomašev. The signature and cusp geometry of hyperbolic knots. Geometry & Topology (in press).

- C. Blundell, L. Buesing, A. Davies, P. Veličković, and G. Williamson. In direction of combinatorial invariance for Kazhdan-Lusztig polynomials. Illustration Principle’22.

- L. Beurer-Kellner, M. Vechev, L. Vanbever, and P. Veličković. Studying to Configure Pc Networks with Neural Algorithmic Reasoning. NeurIPS’22.

- EW. Dijkstra. A observe on two papers in reference to graphs. Numerische Matematik’59.

- A. Derrow-Pinion, J. She, D. Wong, O. Lange, T. Hester, L. Perez, M. Nunkesser, S. Lee, X. Guo, B. Wiltshire, PW. Battaglia, V. Gupta, A. Li, Z. Xu, A. Sanchez-Gonzalez, Y. Li, and P. Veličković. ETA Prediction with Graph Neural Networks in Google Maps. CIKM’21.

- TE. Harris, and FS. Ross. Fundamentals of a technique for evaluating rail internet capacities. RAND Tech Report’55.

- M. Winkenbach, S. Parks, and J. Noszek. Technical Proceedings of the Amazon Final Mile Routing Analysis Problem. 2021.

- P. Veličković, and C. Blundell. Neural Algorithmic Reasoning. Patterns’21.

- A. Krizhevsky, I. Sutskever, and GE. Hinton. ImageNet Classification with Deep Convolutional Neural Networks. NeurIPS’12.

- Q. Cappart, D. Chételat, EB. Khalil, A. Lodi, C. Morris, and P. Veličković. Combinatorial optimization and reasoning with graph neural networks. JMLR’23.

- M. Vlastelica*, A. Paulus*, V. Musil, G. Martius, and M. Rolínek. Differentiation of Blackbox Combinatorial Solvers. ICLR’20.

- A. Deac, P. Veličković, O. Milinković, P-L. Bacon, J. Tang, and M. Nikolić. Neural Algorithmic Reasoners are Implicit Planners. NeurIPS’21.

- B. Wilder, E. Ewing, B. Dilkina, and M. Tambe. Finish to finish studying and optimization on graphs. NeurIPS’19.

- M. Garnelo, and WM. Czarnecki. Exploring the Area of Key-Worth-Question Fashions with Intention. 2023.

- Y. He, P. Veličković, P. Liò, and A. Deac. Steady Neural Algorithmic Planners. LoG’22.

- P. Veličković*, M. Bošnjak*, T. Kipf, A. Lerchner, R. Hadsell, R. Pascanu, and C. Blundell. Reasoning-Modulated Representations. LoG’22.

- D. Numeroso, D. Bacciu, and P. Veličković. Twin Algorithmic Reasoning. ICLR’23.

- JC. Paetzold, J. McGinnis, S. Shit, I. Ezhov, P. Büschl, C. Prabhakar, MI. Todorov, A. Sekuboyina, G. Kaissis, A. Ertürk, S. Günnemann, and BH. Menze. Complete Mind Vessel Graphs: A Dataset and Benchmark for Graph Studying and Neuroscience. NeurIPS’21 Datasets and Benchmarks.

- LR. Ford, and DR. Fulkerson. Maximal movement via a community. Canadian Journal of Arithmetic’56.

- P. Veličković, A. Puigdomènech Badia, D. Budden, R. Pascanu, A. Banino, M. Dashevskiy, R. Hadsell, and C. Blundell. The CLRS Algorithmic Reasoning Benchmark. ICML’22.

- L-PAC. Xhonneux, A. Deac, P. Veličković, and J. Tang. Methods to switch algorithmic reasoning information to study new algorithms?. NeurIPS’21.

- B. Ibarz, V. Kurin, G. Papamakarios, Ok. Nikiforou, M. Bennani, R. Csordás, A. Dudzik, M. Bošnjak, A. Vitvitskyi, Y. Rubanova, A. Deac, B. Bevilacqua, Y. Ganin, C. Blundell, and P. Veličković. A Generalist Neural Algorithmic Learner. LoG’22.

- S. Reed*, Ok. Żołna*, E. Parisotto*, S. Gómez Colmenarejo, A. Novikov, G. Barth-Maron, M. Giménez, Y. Sulsky, J. Kay, JT. Springenberg, T. Eccles, J. Bruce, A. Razavi, A. Edwards, N. Heess, Y. Chen, R. Hadsell, O. Vinyals, M. Bordbar, and N. de Freitas. A Generalist Agent. TMLR’22.

Creator Bio

Petar Veličković is a Workers Analysis Scientist at Google DeepMind, Affiliated Lecturer on the University of Cambridge, and an Affiliate of Clare Hall, Cambridge. Petar holds a PhD in Pc Science from the University of Cambridge (Trinity College), obtained beneath the supervision of Pietro Liò. His analysis issues geometric deep learning—devising neural community architectures that respect the invariances and symmetries in knowledge (a subject he is co-written a proto-book about).

Quotation

For attribution in educational contexts or books, please cite this work as

Petar Veličković, "Neural Algorithmic Reasoning", The Gradient, 2023.

BibTeX quotation:

@article{veličković2023nar,

creator = {Veličković, Petar},

title = {Neural Algorithmic Reasoning},

journal = {The Gradient},

12 months = {2023},

howpublished = {url{https://thegradient.pub/neural-algorithmic-reasoning/},

}

Source link

#Neural #algorithmic #reasoning

Unlock the potential of cutting-edge AI options with our complete choices. As a number one supplier within the AI panorama, we harness the ability of synthetic intelligence to revolutionize industries. From machine studying and knowledge analytics to pure language processing and pc imaginative and prescient, our AI options are designed to boost effectivity and drive innovation. Discover the limitless prospects of AI-driven insights and automation that propel your small business ahead. With a dedication to staying on the forefront of the quickly evolving AI market, we ship tailor-made options that meet your particular wants. Be part of us on the forefront of technological development, and let AI redefine the way in which you use and reach a aggressive panorama. Embrace the longer term with AI excellence, the place prospects are limitless, and competitors is surpassed.