We analyzed top 15 LLMs and their input/output pricing options along with their performance. LLM API pricing can be complex and depends on your preferred usage. If you plan to use:

| Owner | Model* | Input Price ($)** | Output Price ($)** | Context Length*** | Max Output Tokens |

|---|---|---|---|---|---|

Table 1. A comparison of various large language models, showcasing their input/output pricing, context length, and maximum output token limits.

*The ranking of models is based on the Rank (UB) metric in the Chatbot Arena LLM leaderboard.Rank (UB) is defined as one plus the number of models that are statistically better than the target model. Specifically, a model is considered statistically better than another if its lower-bound score exceeds the other’s upper-bound score within a 95% confidence interval. This method ensures a statistically reliable comparison of model performances. Though o3-mini is not yet the leader in the Chatbot Arena, we chose to place it at the top since it is the latest model and we expect it to outperform other models.

**Input and output prices are given per 1 Million tokens.

***The unit of context length is token.

Understanding LLM Pricing

Tokens: The Fundamental Unit of Pricing

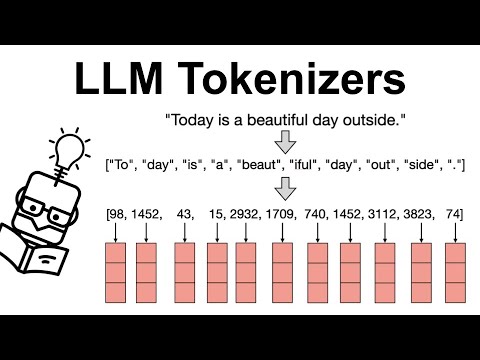

While providers offer a variety of pricing structures, per-token pricing is the most common. Tokenization methods differ across models; examples include:

- Byte-Pair Encoding (BPE): Splits words into frequent subword units, balancing vocabulary size and efficiency.

- Example: “unbelievable” → [“un”, “believ”, “able”]

- WordPiece: Similar to BPE but optimizes for language model likelihood, used in BERT.

- Example: “tokenization” → [“token”, “##ization”]. “token” is a standalone word; “##ization” is a suffix.

- SentencePiece: Tokenizes text without relying on spaces, effective for multilingual models like T5.

- Example: “natural language” → [” natural”, ” lan”, “guage”] or [” natu”, “ral”, ” language”].

Please note that the exact subwords depend on the training data and BPE/WordPiece process. To better understand these tokenization methods, watch the video below:

After grasping tokenization, an average price can be estimated based on project token length. Table 2 outlines token ranges by content type—such as UI prompts, email snippets, marketing blogs, detailed reports, and research papers—while noting that token counts vary across models. Once a model is chosen, its tokenizer can be used to have an idea of the average token count for the content.

| Content Type | Word Count Range (words) | Token Count Range (tokens) | Typical Enterprise Use Cases |

|---|---|---|---|

Table 2. Typical content types, their size ranges, and enterprise considerations (ranges are estimates and may vary).

Context Window Implications

Awareness of the context window concept is another crucial factor to consider regarding pricing. Here, it is essential to ensure that the total number of tokens from both the input and output does not exceed the context window/length. If the total exceeds the context window, it may lead to truncation of the excess output, as shown in Figure 2. Therefore, the output may not be as expected. It is important to note that tokens generated during the Reasoning process are also counted within this limitation.

Max Output Tokens

This is an important parameter in Large Language Models (LLMs) to achieve the desired output and manage costs effectively. While many documentations mention that it can be adjusted using the max_tokens parameter, it is crucial to review the documentation of the specific API being used to identify the correct parameter. It should be adjusted according to the specific needs:

If set too low: It may result in incomplete outputs, causing the model to cut off responses before delivering the full answer.

If set too high: Depending on the temperature (a parameter that controls response creativity) setting, it can lead to unnecessarily verbose outputs, longer response times, and increased cost.

Therefore, it is a parameter that requires careful consideration to optimize resource usage while balancing output quality, cost, and performance.

| Content Type | Input Prompt Example | Input Token Count* | Assumed Output Token Count* |

|---|---|---|---|

Table 3. Example input prompts and estimated token counts per content type.

*This assumes that each model produces responses with an equal number of output tokens—although the token count for both input and output may vary depending on each model’s tokenization, the number has been kept constant here for each model.

The LLM API price calculator can be used to determine the total cost per model when generating the content types from Table 2 via the API using the sample prompts provided in Table 3, as well as for calculating costs for custom cases beyond the suggested content types.

LLM Pricing Overview

LLM API Price Calculator

Comparing Plans

Non-technical users may prefer to use the UI rather than the API, here is the UI pricing:

| Product | Plan | Price | Key Features | Notable Limitations |

|---|---|---|---|---|

Using Multiple Language Models

A tool like OpenRouter allows the same prompt to be sent simultaneously to multiple models. The responses, token consumption, response time, and pricing can then be compared to determine which model is most suitable for the task.

Benefits and Challenges

- Increased Adaptability and Efficiency: Orchestration enhances responsiveness, allowing for real-time assessment of model efficiency, leading to the identification of a cost-effective model and potential savings.

- Prompt Sensitivity and Optimization: Identical prompts can elicit vastly different outputs across models, necessitating prompt engineering tailored to each model to achieve desired results, adding to development and maintenance complexity.

FAQ

What is LLM API Pricing?

Accessing Large Language Models (LLMs) via an Application Programming Interface (API) grants you remote access to AI models. This access is subject to a fee, often called an “API fee,” charged by the service provider. This fee is a critical consideration when integrating LLMs into your applications. It essentially represents the cost associated with each query, request, or task performed through the provider’s API. Because pricing structures can vary widely – based on factors like token usage, API call volume, feature utilization, or subscription models – understanding how providers calculate these costs is essential. With this knowledge, you can make well-informed decisions, selecting the LLM model and provider that best balance your performance needs, desired functionality, and budgetary limitations.

Why is LLM API pricing complex?

LLM API pricing can be complex due to factors like token consumption, context length, and model choice. Tokenization procedures vary across models, with some using Byte-Pair Encoding (BPE), WordPiece, or SentencePiece—each influencing how text is split into tokens and impacting cost efficiency. Understanding these differences helps optimize API usage and pricing.

What factors determine the cost of using a large language model (LLM)?

LLM costs are primarily determined by token usage (both input and output), API call volume, and the specific pricing model (e.g., per-token, subscription).

How can I compare pricing across different LLM models?

Compare input and output token prices, context window limits, and any additional fees. Tools like OpenRouter allow you to send the same prompt to multiple models and directly compare their results, token usage, speed, and pricing. Consider your typical content length and usage patterns to estimate overall costs.

What is the difference between input tokens and output tokens?

Input tokens are the tokens in the prompt you send to the LLM, while output tokens are the tokens in the generated response. For reasoning models, it’s important to note that tokens generated during the reasoning process itself are also counted as output tokens, impacting the final cost. Both input and output contribute to the overall cost.

How does the text volume I request affect the processing response time and overall budget when using an LLM API?

Larger text requests require more processing, increasing response time and costs. Optimize input sizes and use an LLM API pricing calculator to estimate token counts and manage your budget effectively.

What resources are available to the LLM community to support understanding and optimizing LLM pricing information?

The LLM community has developed various tools and benchmarks to help users understand and optimize LLM pricing. These resources often include calculators and comparison charts that offer insights into the power and efficiency of different models. Platforms like Hugging Face and GitHub host tools and code developed by the community to analyze model performance and costs. Many services offer community support through forums or chat features.

External Links

Source link

#Major #Providers #Comparison

“>

“>